Preorder Your 2U Liquid-Cooled AI Server With 8× NVIDIA Rubin GPU Allocations

Ultra-dense, liquid-cooled AI compute built for frontier-scale training and inference. Limited production slots available.



Architectural Breakthrough: Rubin NVL8

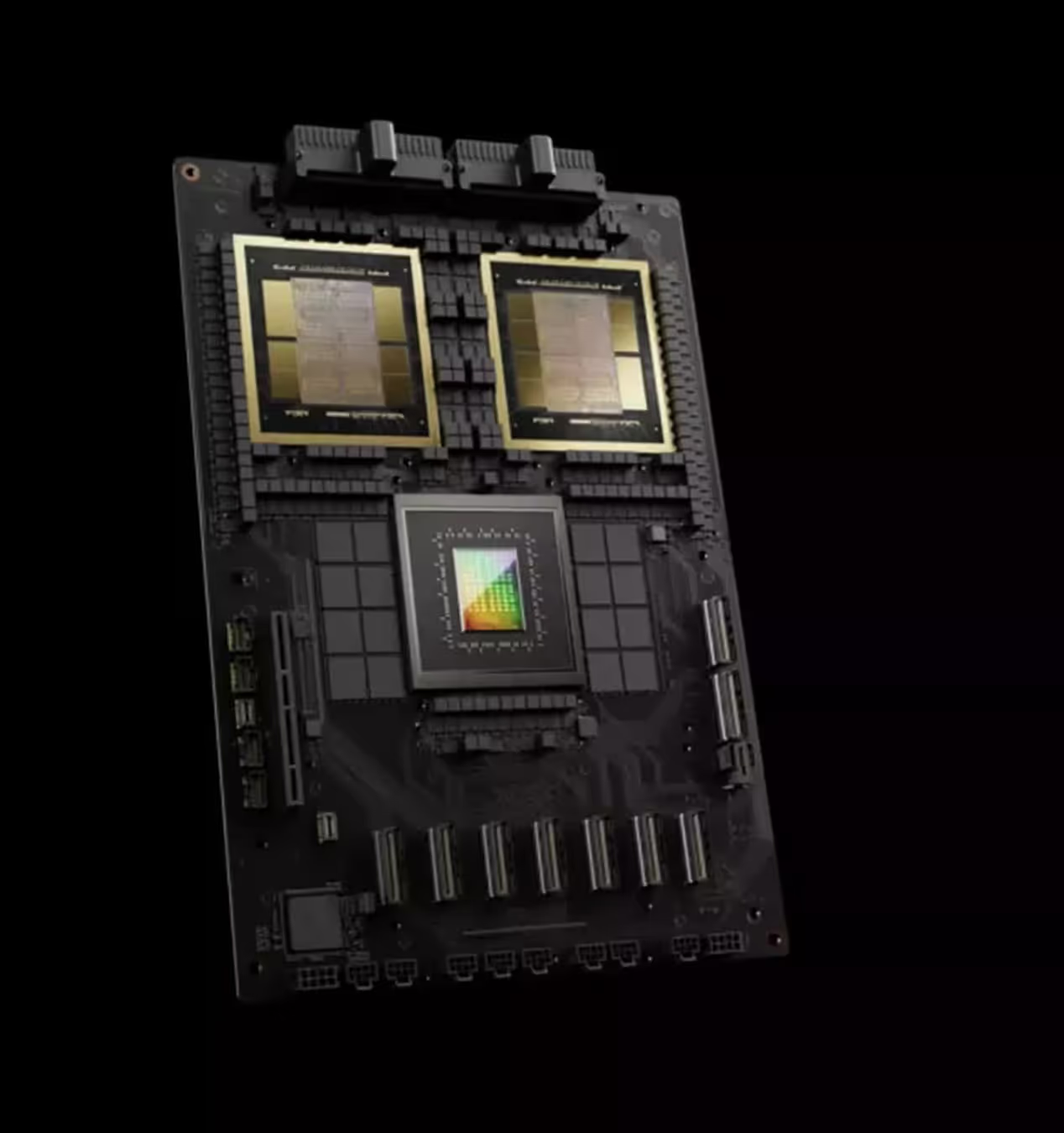

The NVIDIA Rubin NVL8 platform represents a fundamental rethinking of AI server architecture. Instead of treating GPUs, networking, memory, and power as loosely coupled components, NVL8 unifies them into a single, purpose-built compute fabric. The result is an ultra-dense 2U system that delivers hyperscale-class performance in a fraction of the physical footprint.

Rubin NVL8 Unified Compute Fabric

Collapses GPU, networking, memory, and power into a single, purpose-built AI plane—eliminating rack-level bottlenecks and enabling true node-scale supercomputing in 2U.

Native 8-GPU HGX Baseboard

Delivers extreme parallelism with direct GPU-to-GPU pathways, reducing latency and unlocking linear scaling for frontier training and inference workloads.

Integrated 800G Networking Plane

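Eight OSFP ports from the onboard CX9 deliver 800G InfiniBand XDR or Ethernet for east–west GPU traffic. A PCIe Gen6 x16 slot provides north–south expansion and supports BlueField-4 DPUs.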

Direct-to-Chip Liquid Cooling Architecture

Maintains sustained peak performance under full load, removing thermal ceilings that limit air-cooled systems and enabling continuous high-density compute.

54V DC Power Backbone (Up to 24kW)

Purpose-built for hyperscale energy delivery in a single chassis, supporting next-generation GPUs without compromise or derating.
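The 24 kW figure is easier to picture as busbar current, which follows from the power relation P = V × I. The sketch below is a back-of-the-envelope calculation only. It assumes the full 24 kW is drawn at exactly 54 V and ignores conversion losses and voltage tolerance. It is not a vendor electrical specification.

```python
# Back-of-the-envelope busbar current for the 54V DC power backbone.
# Assumptions (not vendor specs): full 24 kW load, exactly 54 V, no losses.

def busbar_current_amps(power_watts: float, voltage_volts: float) -> float:
    """Return DC current in amperes from the power relation I = P / V."""
    if voltage_volts <= 0:
        raise ValueError("voltage must be positive")
    return power_watts / voltage_volts

MAX_POWER_W = 24_000   # "Up to 24kW" from the platform specs
BUS_VOLTAGE_V = 54.0   # 54V DC busbar

current = busbar_current_amps(MAX_POWER_W, BUS_VOLTAGE_V)
print(f"{current:.1f} A")  # 24000 / 54 = 444.4 A
```

Delivering the same 24 kW at 12 V would require 2,000 A. This illustrates why high-power racks favor higher-voltage DC distribution: at a given power level, a higher voltage means less current to carry.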

Gen-6 Intel® Xeon® Control Plane

Anchors the NVL8 fabric with high-wattage, high-bandwidth CPUs optimized for orchestration, data movement, and pipeline control—ensuring GPUs operate at full utilization without host-side contention.

System Configuration & Platform Specs

The Rubin NVL8 platform is engineered as a complete, production-grade AI node—ready for rack-scale deployment from day one.

At its core is the NVIDIA NVL8 HGX baseboard, purpose-built for extreme parallelism and sustained performance under full load.

Core Platform

- 8× NVIDIA Rubin GPUs via NVL8 HGX baseboard

- Dual Gen-6 Intel® Xeon® CPUs (up to 350W each)

- Up to 32× DDR5 DIMMs (6400 MT/s)

- Fully direct-to-chip liquid cooling

- 54V DC busbar architecture supporting up to 24 kW
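The DDR5 "6400" rating is a data rate in megatransfers per second. Peak theoretical bandwidth per DIMM is that rate multiplied by the module's 64-bit (8-byte) data width, which is the sum of two 32-bit subchannels with ECC bits excluded. The sketch below computes only this per-DIMM peak. The system-wide total depends on how the 32 slots map to memory channels on each CPU, and the specs above do not state that layout.

```python
# Peak theoretical bandwidth of one DDR5 DIMM.
# Assumptions: 6400 MT/s data rate, 64-bit data bus (ECC bits excluded),
# no refresh or protocol overhead. Real sustained bandwidth is lower.

BITS_PER_BYTE = 8

def dimm_peak_gb_per_s(transfers_per_s: float, bus_width_bits: int = 64) -> float:
    """Peak bandwidth in GB/s (decimal: 1 GB = 1e9 bytes)."""
    bytes_per_transfer = bus_width_bits / BITS_PER_BYTE
    return transfers_per_s * bytes_per_transfer / 1e9

per_dimm = dimm_peak_gb_per_s(6400e6)
print(f"{per_dimm:.1f} GB/s per DIMM")  # 6400e6 * 8 bytes / 1e9 = 51.2
```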

Networking

- East–West Fabric: 8× OSFP ports from onboard CX9 (InfiniBand XDR / Ethernet 800G)

- North–South Expansion: 1× PCIe Gen6 x16 FH3/4L slot (BlueField-4 DPU ready)
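The aggregate line rate of the eight 800G OSFP ports is straightforward to compute. This sketch assumes every port runs at the full 800 Gb/s per direction and ignores encoding and protocol overhead. The result is therefore an upper bound on line rate, not measured throughput.

```python
# Aggregate east-west line rate for the onboard CX9 ports.
# Assumptions: 8 ports, each at 800 Gb/s per direction, no protocol overhead.

PORTS = 8
PORT_RATE_GBPS = 800  # gigabits per second per port

def aggregate_rate(ports: int, rate_gbps: float) -> tuple[float, float]:
    """Return (total Tb/s, total GB/s) for the given port count and per-port rate."""
    total_gbps = ports * rate_gbps
    return total_gbps / 1000, total_gbps / BITS_PER_BYTE

BITS_PER_BYTE = 8

tbps, gbytes = aggregate_rate(PORTS, PORT_RATE_GBPS)
print(f"{tbps:.1f} Tb/s = {gbytes:.0f} GB/s per direction")
```

If each GPU were paired with one port, each GPU would get 800 Gb/s (100 GB/s) of scale-out bandwidth. That one-to-one pairing is an assumption here, not a stated spec.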

Storage

- Data: 8× hot-swap E1.S NVMe SSDs

- Boot: 2× PCIe M.2 2280

Physical

- Form Factor: 2U rack mount

- Dimensions: 448 × 87 × 800 mm (W × H × D)

Built For Frontier Workloads

Rubin NVL8 is designed for organizations operating at the edge of what today’s infrastructure can support. This platform exists for teams that cannot afford thermal throttling, interconnect bottlenecks, or unpredictable scaling limits.

Frontier Model Training & Large-Scale Inference Platforms

Train trillion-parameter models with fewer nodes, lower latency, and sustained peak throughput across long-running jobs. The same platform also powers real-time, high-volume inference with predictable performance and rack-level efficiency.

Scientific Computing & HPC

Enable dense, high-bandwidth compute for climate modeling, genomics, physics simulations, and national research workloads.

Expert Configuration and Deployment Support

We ensure your AI infrastructure is perfectly matched to your workloads, with comprehensive support from planning through production deployment.

Reserve Your Rubin Allocation

The Rubin NVL8 platform is entering production against fixed global capacity. Once this window closes, subsequent systems move into later manufacturing cycles.

This preorder secures:

- A 2U liquid-cooled NVL8 system

- 8× NVIDIA Rubin GPU allocations

- Priority placement in the September 2026 production run